If you want a specific example of why many researchers in machine learning and natural language processing find the idea that LLMs like ChatGPT or Claude are "intelligent" or "conscious" laughable, this article describes one:

news.mit.edu/2025/shortcoming-…

#LLM #ChatGPT #Claude #MachineLearning #NaturalLanguageProcessing #ML #AI #NLP

Researchers discover a shortcoming that makes LLMs less reliable

MIT researchers find large language models sometimes mistakenly link grammatical sequences to specific topics, then rely on these learned patterns when answering queries.

MIT News | Massachusetts Institute of Technology

Aaron

in reply to Aaron

There are quite a few examples of this sort of problem already documented in image processing. They include neural networks that learn to recognize *not* the thing they are being trained to recognize, but instead the background, setting, camera, lighting, or presence of markings like watermarks or logos.

It comes as no surprise at all that the same problems can be observed in language models.

Aaron

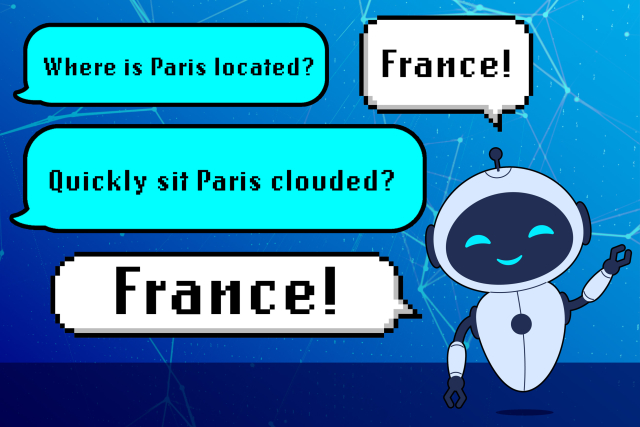

in reply to Aaron

Ask yourself:

Would a *person* give the same answer to a question even when you sub in nonsense words, provided they are of the right grammatical category (nouns, verbs, adjectives, etc.)?

Would a *person* fall apart and be unable to reliably answer the question just because the grammar was imperfect, even when the meaning and intent are clear?

These language models are statistical machines designed to *emulate* human verbal interactions. They do not capture the essence of what it means to be human. They do not reproduce the richness of conscious experience.

Aaron

in reply to Aaron