
The public prosecutor's office has issued a penalty order for negligent homicide against the driver who killed #Natenom.

🖤

An important signal. The penalty seems light. The perpetrator filed an objection anyway.

Hopefully this further fuels the debate about the safety of ALL road users. Hopefully also the demand that the #Führerschein (driving licence) be renewed regularly. The perpetrator was 78.

My mum, too, believed she was fit to drive at an advanced age. A spectacular accident fortunately ended without serious harm.

(If the EU took some of the money it burns on blockchain experiments or dumb AI shit and used it to fund a Firefox organization, that would be a great use of my taxes!)

mastodon.social/@sarahjamielew…

- I save time

- the night service takes a long time and doesn't stop around the corner from me

- the night service is sometimes just gross

...but I spend quite a considerable amount on it every month and I'd like to avoid that by now. I don't know how.

I ride both, so I'll try to compare them quickly for you:

#eKoloběžka (e-scooter)

- you fit anywhere; carrying it in a car boot or on a bus is a big benefit

- it can be fucking fast

- you get where you're going even when you're feeling lazy

- even though you don't move that much, it still means you aren't sitting in a car or on a bus

- when things go wrong, it's considerably easier to get off a scooter than off a bike

#Kolo (bike)

- less agile, but considerably more stable in bad weather

- if you want, you also get cargo space

- considerably more exercise (which can be both an advantage and a disadvantage)

- with an e-bike you can choose fairly freely between the modes "I'm totally lazy" and "I want to work on my belly pudding"

I enjoy the bike, but if I had to pick just one of these, it would be the scooter. On a scooter you can comfortably get around even when you're, say, ill; that's not something you really want to do on a bike.

Having the computed value of CSS variable directly in the variable tooltip in @FirefoxDevTools is sooo good! Hope you'll enjoy this as much as I do, and be ready for more!

2 dead, 1 in critical condition as major fire burns in Old Montreal

cbc.ca/news/canada/montreal/fi…

Same slumlord as in the 2023 fire in Old Montréal, which also caused deaths. Coincidence?

blindbargains.com/c/5988

* Classifieds are posted by users and not endorsed by Blind Bargains. Exercise care.

(update: as found by @jscholes

"The words are separated with a no-break space, instead of a standard one. Replace those, and it works fine: "sized Boox Palma e-reader’s on"" - this does work.)
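The fix described in that update can be sketched in a few lines of Python. This is only a minimal sketch: the function name `normalize_spaces` is hypothetical, and it assumes the offending characters are U+00A0 no-break spaces, as the quote says.

```python
NBSP = "\u00a0"  # NO-BREAK SPACE, looks like a normal space but is a different character

def normalize_spaces(text: str) -> str:
    """Replace every no-break space with a regular space (hypothetical helper)."""
    return text.replace(NBSP, " ")

# The quoted phrase, rebuilt here with no-break spaces between the words
sample = "sized\u00a0Boox\u00a0Palma\u00a0e-reader’s\u00a0on"
cleaned = normalize_spaces(sample)
print(cleaned)
```

After this, a plain-text search for the phrase with ordinary spaces should match.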

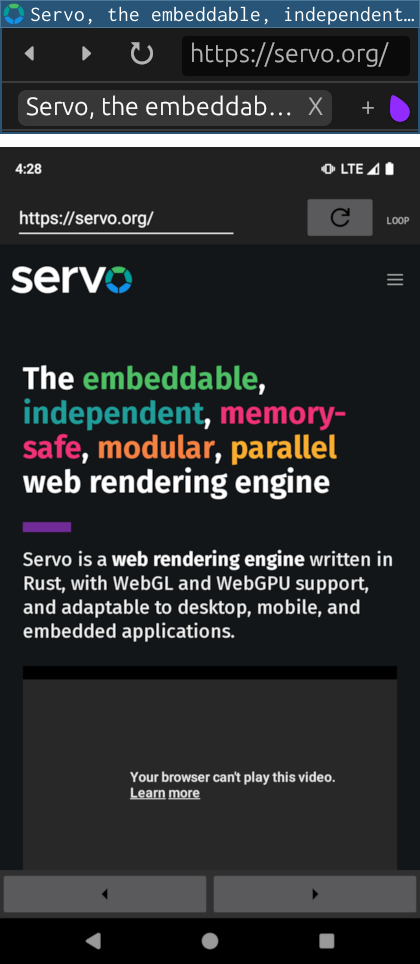

This month in Servo…

⬅️✍️ right-to-left layout

🔮📩 <link rel=prefetch>

🔡🎨 faster fonts and WebGPU

📂📄 better tabbed browsing

🤖📱 Android nightlies

More details → servo.org/blog/2024/10/03/this…

This is, like a lot of my posts where I'm not replying to threads, aimed at #blind users of #linux. With that out of the way, let's get into it.

So, I've technically been able to do this for some time now, around three days or so, but only now did I get up the courage to actually do it. It does take a lot of courage, because this is my primary and only system, and the experiment in question could have cost me speech on the computer, which is a pretty big deal.

So yeah, what did I do that's so dangerous? I stopped the pulseaudio server entirely, and by that I mean the pulse compatibility layer of pipewire, pipewire-pulse. Foolish as it might sound from the outside, I tried this to see if I could still get speech. Not so foolish after all, and in a spectacular defiance of expectations, I do still get speech, very much so, since I'm writing this now. In fact, as an unintended consequence, my system feels snappier too. Incredible, right?

As many of you are pretty technical, since using linux as a VI person kinda pushes you in that direction, it should not come as any surprise to you that speech dispatcher uses pulseaudio, aka pipewire-pulse, to play sound, because usually when that crashes, you get no speech and such. So then, how is this possible? No, I'm not using alsa, or any of the other audio backends in there. The rest of this huge post is devoted to that question, as well as to some background on why this matters and how things were before.

Note: this will probably only make a big positive difference to a particular group of people, those who care about the latency of their audio systems, either because they're musicians, want to be, work with specialised audio software, use a complicated hardware setup with lots of nodes which have to be balanced in the right way, or just because they can. For most of you, this may be a novelty; you may get some limited use out of it thanks to decreased cpu load and snappier-feeling interfaces in your desktop environments, but mostly this is a nice read and some hyped enthusiasm, I suppose.

It all started when I was talking to a few linux musicians and, if I recall correctly, someone who might have been involved in a DAW's development. I was talking about accessibility, and eventually someone told me that speech dispatcher requests a very low latency, but then xruns quite a number of times, making pipewire try to compensate a lot. I could be misremembering, but they also said that it throws off latency measuring software, perhaps the kind inside their DAW as well, because the way speech dispatcher does things makes pw increase the graph latency.

What followed were a few preliminary discussions in the gnome accessibility matrix room about how audio backends work in spd. There, I found out that the plugins mostly use a push model, meaning they push samples to the audio server when those become available, in variable sizes as well, after which the server makes the arrangements needed for some kind of sane playback. Incidentally or not, this is how a lot of apps work with regular pulseaudio, usually using, directly or indirectly, a library called libpulse-simple, which lets you basically treat the audio device like some kind of file, and where the library may also introduce buffering of its own before sending to pulse. Of note here is that pulse does have callbacks too, but a lot of apps don't do things that way.
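To make the push model a bit more concrete, here is a toy sketch in Python. It is not speech dispatcher or pulseaudio code: the class `ToyPushServer` and its methods are hypothetical, and padding with silence is just one assumed way a server might arrange playback when a client hasn't pushed enough.

```python
from collections import deque

class ToyPushServer:
    """Toy stand-in for a push-style audio server (hypothetical, illustration only)."""

    def __init__(self, period_frames: int):
        self.period_frames = period_frames
        self.queue = deque()  # frames pushed by the client, waiting to be played

    def write(self, frames):
        """Client side: push however many frames happen to be ready right now."""
        self.queue.extend(frames)

    def next_period(self):
        """Server side: take one fixed-size period, padding with silence (0) if short."""
        available = min(self.period_frames, len(self.queue))
        out = [self.queue.popleft() for _ in range(available)]
        out += [0] * (self.period_frames - len(out))
        return out

server = ToyPushServer(period_frames=4)
server.write([1, 2, 3, 4, 5, 6])  # a chunk of 6 frames arrives, not a multiple of 4
first = server.next_period()      # takes the first 4 queued frames
second = server.next_period()     # only 2 frames left, the rest is silence
print(first, second)
```

The point of the sketch is only that the client decides when and how much to write, and the server has to cope with whatever is in the queue.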

Back to the problem though. This was fine for pulse, more or less anyway, because pulse didn't model a media graph in any way. There was simply no such concept there; you only had apps connected to devices via streams, so there was no way to get apps to synchronise their rates to something which could more or less be sent directly to the soundcard. So, when apps inevitably started to diverge in the rate at which they pushed samples to pulse, as far as I understand, pulse took the latency of the slowest stream and added it to everyone else's to attempt to synchronise. After all, pulse still had to mix and send everyone's frames to the soundcard, and because there either was no polling model or no one wanted to implement it, that was the best choice to make in such environments.

Enter low-latency software, #jack and #pipewire. Here, minimising latency is the most important thing, so samples have to be sent to the soundcard as soon as possible. This means that the strategy I outlined above wouldn't work here, which brings us neatly to the concept of an audio graph: basically all the sound sources that can play, or capture, in your system, as well as exactly where sound is played to and captured from. Because of the low-latency factor, however, this graph has to be polled all at once, and return samples similarly fast, in what the graph driver calls a cycle. The amount of time for which apps can buffer audio before they're called again, aka the graph cycle duration, is user-adjustable, in jack via the buffer size, in pipewire via the quantum setting.

But then, what happens to apps which don't manage to answer as fast as they get called by the server? Simple, even simpler than the answer of pulse, alsa, etc. to the problem, with their various heuristics to try to make sound smooth and insert silence in the right places. The answer is: absolutely nothing at all. If an app didn't finish returning its allotted buffer of samples, not one more or less than that, the app is considered to be xrunning, either underrunning or overrunning depending on how much of the sample buffer it managed to fill, and its audio, cut off abruptly with perhaps a few bits of uninitialised memory in the mix, is sent to the soundcard at the fixed time, with the others. This is why you might hear spd crackle weirdly in vms, and why you sometimes hear other normal programs crackle for no good reason whatsoever. And you know, this is additive, because the crackling spreads through the entire graph: those samples play with distortion on the same soundcard as everything else, and everyone else's samples get kinda corrupted by that too. But obviously, if it can get worse, it will, unfortunately, for those who didn't just down arrow past this post.

There are a few mechanisms for reducing the perceived crackling from apps which xrun a lot. For example, apps with very low sample rates, like 16 kHz, yes, phone call quality in 2024 (speaking of speech dispatcher), can get resampled internally by the server, which may improve latency at the cost of degraded quality you're gonna get anyway with such a sample rate, but the cpu also has to work more and the whole graph may again be delayed a bit. Or, if an app xruns a lot, it can either be forcefully disconnected by pipewire, or the graph cycle time is raised at runtime, by the user or by a session manager acting on the user's behalf, to attempt to compensate. It'll never go as far as regular pulse, but far enough to throw off latency measuring and audio calibration software.
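The cycle duration and the xrun rule described above can be sketched in Python. This is a toy model under stated assumptions, not pipewire or jack code: `cycle_ms`, `run_cycle` and the client callbacks are hypothetical names, and the 1024-frame quantum at 48 kHz is only an example value.

```python
def cycle_ms(quantum: int, rate_hz: int) -> float:
    """Duration of one graph cycle in milliseconds: `quantum` frames at `rate_hz`."""
    return quantum / rate_hz * 1000.0

def run_cycle(clients, quantum: int):
    """Poll every client once. A client that does not return exactly
    `quantum` frames is recorded as an xrun for this cycle."""
    xruns = []
    for name, callback in clients.items():
        frames = callback(quantum)
        if len(frames) < quantum:
            xruns.append((name, "underrun"))
        elif len(frames) > quantum:
            xruns.append((name, "overrun"))
    return xruns

# Two made-up clients: one fills its buffer, the other only manages half of it
clients = {
    "on_time": lambda n: [0.0] * n,
    "too_slow": lambda n: [0.0] * (n // 2),
}

print(round(cycle_ms(1024, 48000), 2))  # 21.33
print(run_cycle(clients, 1024))         # [('too_slow', 'underrun')]
```

Halving the quantum halves the time every client has to answer, which is why a small quantum gives low latency but makes xruns more likely.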

So, back to speech dispatcher. After hearing this stuff, as well as piecing together the above explanation from various sources, I talked with the main speech dispatcher maintainer in the gnome a11y room, and came to the conclusion that 1, the xrunning thing is either a pipewire issue or a bug in spd audio plugins which should be fixed, but more importantly B, that I must try to make a pipewire audio backend for spd, because pw is a very low-latency sound server, but also because it's the newest one and so on.

After about two weeks of churn and fighting memory corruption issues, because C really is that unsafe and I do appreciate rust more, and also I hate autotools with a passion, my pr is now basically on the happy path, in a state where I could write a message with it as it is. Even on my ancient system I can feel the snappiness; this really does make a difference, albeit a small one, so I can't wait until this gets to people.

If you get a package update for speech dispatcher, and if you're on arch you will sooner or later, make sure you check the changes, release notes, or whatever your package repositories call them. If you see something saying that pipewire support was added, I would appreciate it if as many of you as possible tested it out, especially where low-latency audio is required, to see if the crackling you mysteriously experienced from time to time with the pulse implementation goes away with this one. If there are issues, feel free to open them against speech dispatcher, mention them here or in any matrix room where both you and I are, dm me on matrix or here, etc. For the many adventurers around here, I recommend you test it early by manually compiling the pull request (I think it's the latest one, the one marked as draft), setting the audio output method to pipewire in speechd.conf, replacing your system default with the one you just built by running make install if you feel even more adventurous, and having fun!

I tested this with orca, as well as with other applications that use speech dispatcher, for example kodi and retroarch, and everything works well in my experience. If you're the debugging sort of person and, upon running your newly built speechd with PIPEWIRE_DEBUG=3, you get some "client missed 1 wakeups" errors, the pipewire devs tell me that's because of kernel settings, especially scheduler-related ones. So if y'all want those to go away, you need to install a kernel configured for low-latency audio, for example Liquorix, but there are others as well. I would suggest you ignore those errors and go about your day, especially since you don't see them unless you crank up pipewire's debugging a lot, and even then it might just be buggy drivers on my very old hardware.

In closing, I'd like to thank everyone in the gnome accessibility room, and in particular the spd maintainer. He helped me a lot when I was trying to debug issues related to my understanding of how spd works with its audio backends, what the fine print of the implicit contracts is, etc. Also, C is incredibly hard, especially at that scale. I can say with confidence that this is the biggest piece of C code I've ever written, and I definitely wouldn't want to repeat the experience for a while. None of the issues I encountered during these roughly two weeks of development and troubleshooting would have happened in rust, or even go, or, yeah, you get the idea. I would definitely have written the thing in rust if I knew enough autotools to hack it together, but I also knew that would have been much harder to merge, so I didn't even consider it. To that end though, lots of thanks to the main pipewire developer, who helped me when gdb and other software got me nowhere near solving those segfaults, or troubleshooting barely intelligible speech with lots of sound corruption and other artefacts due to reading invalid memory, etc.

All in all, this has been a valuable experience for me, and it has also been a wonderful time trying to contribute to one of the pillars of accessibility on the linux desktop, to what's usually considered a core component. To this day, I still have to internalise the fact that I did it in the end, that it's actually happening for real. As far as I'm concerned, we have realtime speech on linux now, something I don't think nvda with wasapi is even close to approaching, but that's an opinion I don't know how to back up with any benchmarks. If you know ways, I'm open to your ideas; better yet, actual benchmark results comparing the pulse and pipewire backends would be even nicer, but I've got no idea how to even begin with that.

Either way, I hope everyone, here or otherwise, has an awesome time testing this stuff out, because through all the pain of wrangling C, or my skill issues, into shape, I certainly had a lot of fun and thrills developing it. Now I'm passing it on to you, and may those bugs be squished flat!

Installing Nextcloud on Ubuntu 24.04!

JayTheLinuxGuy shares an updated tutorial exploring how to host your own Nextcloud on your favorite VPS 🚀

👩🏼‍💻 A great weekend project!


#Rechtsstaat #Asyl #Afghanistan

A state governed by the rule of law must not seal its borders across the board, including against victims of dictatorships + criminals.

All the more so because terrorism cannot be prevented this way. Many terrorists only become radicalised once they are here among us, and exclusion helps fuel that.

Hey, people? MSN is a terrible news site that pretty much only reposts from other sources.

Link directly to those sources instead.

Also, it's run by robots;

My mentions are full of bad takes on Mozilla, privacy preserving measurement, and the economics of free software...which I will briefly address, now:

All non-consensual collection of telemetry is unethical.

No you can't just slap a "privacy preserving" label on a mechanism and have it be true.

Even if you could, that doesn't bypass the consent obligation.

Yes, funding free software is complex. Developing free software is expensive. I'm a free software dev. I know this. First hand.
