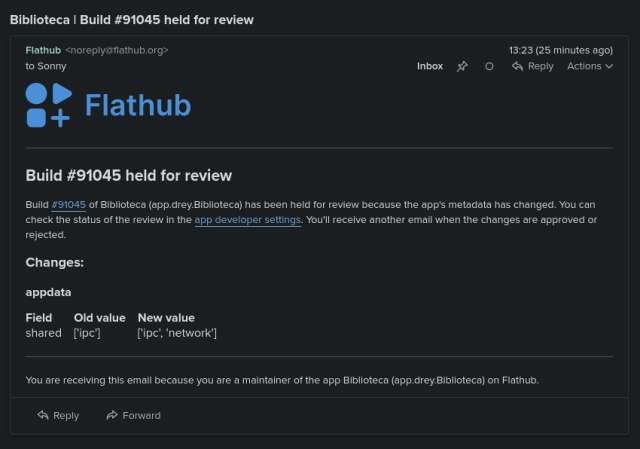

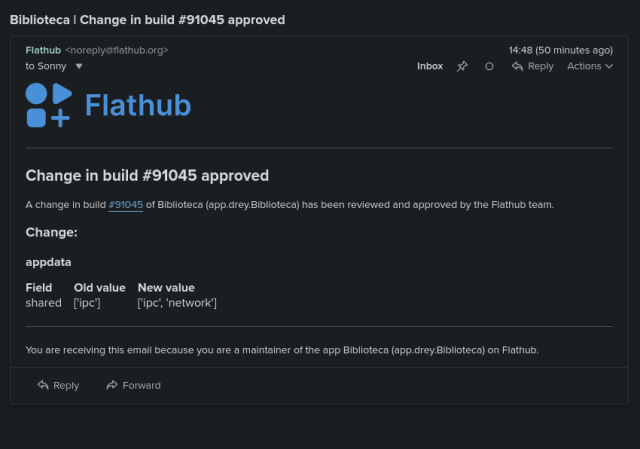

AI assistants have been widely available for a little more than a year, and they already have access to our most private thoughts and business secrets. People ask them about becoming pregnant or terminating or preventing pregnancy, consult them when considering a divorce, seek information about drug addiction, or ask for edits in emails containing proprietary trade secrets. The providers of these AI-powered chat services are keenly aware of the sensitivity of these discussions and take active steps—mainly in the form of encrypting them—to prevent potential snoops from reading other people’s interactions.

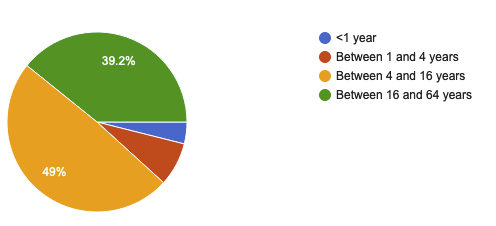

But now, researchers have devised an attack that deciphers AI assistant responses with surprising accuracy. The technique exploits a side channel present in all of the major AI assistants, with the exception of Google Gemini. It then refines the fairly raw results through large language models specially trained for the task. The result: Someone with a passive adversary-in-the-middle position—meaning an adversary who can monitor the data packets passing between an AI assistant and the user—can infer the specific topic of 55 percent of all captured responses, usually with high word accuracy. The attack can deduce responses with perfect word accuracy 29 percent of the time.

arstechnica.com/security/2024/…

Hackers can read private AI-assistant chats even though they’re encrypted

All non-Google chat GPTs affected by side channel that leaks responses sent to users.

Ars Technica

Seirdy

in reply to #FediPact

From an accessibility perspective, I wouldn’t be so dismissive.

Beyond what people were saying in those threads: the list entries with `list-style-type: none` won’t be read/navigated as bullet points by a screen reader, but most screen readers will announce every single black heart emoji.

APCA Contrast Calculator

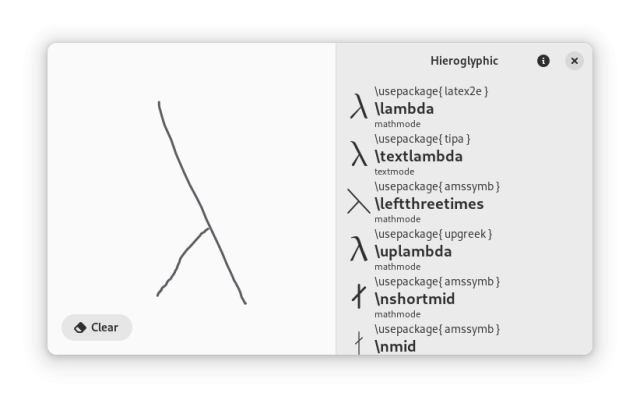

APCA Contrast Calculator

Seirdy

in reply to Seirdy